AI Bias: Examples, Meaning, and How to Avoid It

AI has been around for more than 60 years. One of the first AI programs, a checkers-playing program, was introduced in the 1950s by Arthur Samuel. One of the earliest documented AI bias examples dates back to the 1970s at St George's Hospital Medical School, where a computer program was used to make the admission process faster. It ended up making things worse: the program was found to discriminate against women and racial minorities, and the case badly damaged the institution's reputation.

Another notorious AI bias example is Amazon Rekognition, a cloud-based software-as-a-service product. It was created to recognize things in images and videos by comparing them against reference images stored in a database. Because the software was made by AWS itself, people did not question its reliability, and law enforcement agencies around the US started using it to identify suspects from huge databases of criminal records in seconds.

Things did not go as planned: in a 2018 test run by the ACLU, the system falsely matched 28 members of Congress with people who had been arrested for various crimes. Worse, about 40% of those false matches were people of color. So now you roughly know what AI bias means. Let's look at it in more detail and see why it happens.

AI Bias Meaning

AI has more advantages than disadvantages, but bias is one of the disadvantages. Humans are many things, but not perfect, so an AI programmed and trained by humans can inherit their flaws. A simple definition of AI bias: it occurs when an AI algorithm produces results that are systematically prejudiced because of erroneous assumptions in the machine learning process, so the AI behaves in a way it was never supposed to. The data may look unbiased while the output is clearly biased. The bias often reflects social intolerance or institutional discrimination.

Why Does AI Bias Happen?

First Situation

As we said before, the bias may take the form of racial bias, gender prejudice, or even age discrimination. More often than not, the underlying problem is human prejudice, whether conscious or unconscious. At any stage, from development to training to deployment, the developer's own prejudices can sneak into the AI solution, and the system adopts the human bias. Psychologists have described around 180 cognitive biases, some of which may find their way into algorithms.

For example, if a face recognition model is not given enough pictures of women during training, it can end up with a gender bias, with its output for women's faces influenced by unrelated factors.
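One way to catch this early is to measure how each group is represented before training. The snippet below is a minimal sketch, not a complete audit: the group labels, the sample data, and the `min_share` threshold are all hypothetical values chosen for illustration.

```python
from collections import Counter

def group_shares(groups):
    """Return each group's share of the dataset as a fraction between 0 and 1."""
    counts = Counter(groups)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}

def underrepresented(groups, min_share=0.3):
    """List groups whose share is below min_share (a hypothetical threshold)."""
    shares = group_shares(groups)
    return sorted(group for group, share in shares.items() if share < min_share)

# Hypothetical metadata for a face dataset: 80 images of men, 20 of women.
sample = ["man"] * 80 + ["woman"] * 20
print(group_shares(sample))
print(underrepresented(sample))
```

A flagged group does not prove the model will be biased, but it is a signal to collect more data for that group before training.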

Second Situation

Bias can also appear when the AI is trained on low-quality data that skews the system's output. For example, if a system for recruiting employees is trained on data about the company's previous employees and most of them are men, the AI can again develop a gender bias.
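A quick check on historical hiring data is to compare the share of positive outcomes per group. The sketch below uses invented records and hypothetical function names. The 0.8 cut-off mentioned in the comment is the commonly cited "four-fifths" rule of thumb, used here only as a warning sign, not as a verdict.

```python
def selection_rates(records):
    """Share of positive outcomes (hired=True) for each group."""
    totals, hired = {}, {}
    for group, was_hired in records:
        totals[group] = totals.get(group, 0) + 1
        hired[group] = hired.get(group, 0) + int(was_hired)
    return {group: hired[group] / totals[group] for group in totals}

def impact_ratio(rates):
    """Lowest selection rate divided by the highest one (1.0 means parity)."""
    return min(rates.values()) / max(rates.values())

# Hypothetical past applications as (group, hired?) pairs.
history = (
    [("men", True)] * 60 + [("men", False)] * 40
    + [("women", True)] * 15 + [("women", False)] * 35
)
rates = selection_rates(history)
ratio = impact_ratio(rates)
# A ratio well below 0.8 suggests a model trained on this data may learn the skew.
print(rates, ratio)
```

If the ratio is low, a model that simply imitates past decisions will tend to reproduce the same gap.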

Third Situation

Gender bias can also be seen in natural language processing algorithms. It happened to Google's own translation algorithm: because it learned from news reports and social media posts, it developed a bias toward traditional gender roles when translating gender-neutral text. It would produce translations like "he does business" or "she takes care of children" even when the source sentences were gender-neutral.

Fourth Situation

More data does not automatically mean less bias: a model can also develop biases when the data it is given measures the wrong thing. For example, suppose a model is trained to recognize safe places in a city, and it works by looking at where more cases are reported and more criminals are caught. It might mark the safest places as the most dangerous. An area where police patrolling is strong and more criminals are caught will look less safe to the AI than an area where crimes go unreported and criminals are not caught.
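The effect is easy to reproduce with a few numbers. The sketch below is a toy illustration with made-up districts: it assumes recorded crime is simply actual crime multiplied by the share that gets detected, which is a simplification.

```python
def recorded_crimes(true_crimes, detection_rate):
    """Crimes that reach the dataset: only the detected share of what happened."""
    return true_crimes * detection_rate

# Hypothetical districts: (actual crimes per year, share of crimes recorded).
districts = {
    "well_patrolled": (100, 0.9),
    "rarely_patrolled": (300, 0.2),
}

recorded = {name: recorded_crimes(*values) for name, values in districts.items()}

# What a model sees in the data versus what is actually true.
looks_most_dangerous = max(recorded, key=recorded.get)
truly_most_dangerous = max(districts, key=lambda name: districts[name][0])
print(looks_most_dangerous, truly_most_dangerous)
```

The district with a third of the actual crime ends up with the most recorded crime, so a model trained on the records ranks it as the most dangerous.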

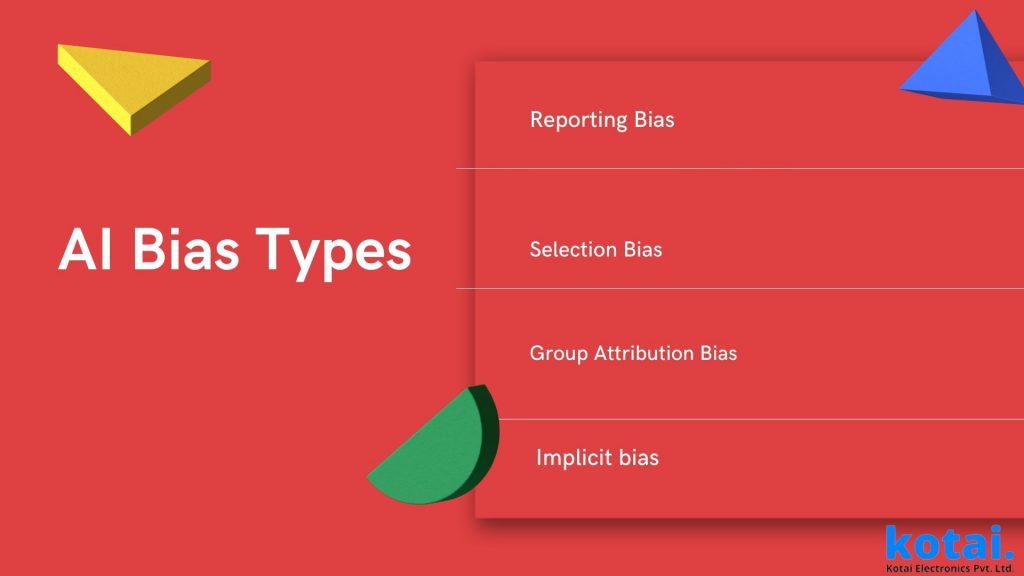

AI Bias Examples and Types

Reporting Bias:

An AI algorithm should obviously be trained on data that reflects the real-life situations in which it will be used. Reporting bias occurs when the frequency of events in the training data set does not match their frequency in reality.

This AI bias example can be seen in fraud detection tools used in remote geographic locations. When suspected fraud is reported in a densely populated area, investigators can easily check whether it is real and record the actual outcome. For a remote location, investigators make sure a case is almost certainly real fraud before travelling there.

So in the submitted reports nearly every case from the remote area is confirmed fraud, and the AI concludes that the area is mostly filled with fraudulent customers.
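The numbers below make the gap concrete. They are invented for illustration, and the sketch assumes both regions have the same true fraud rate, so any difference comes only from which cases get investigated.

```python
def recorded_fraud_rate(confirmed, investigated):
    """Share of investigated reports that were confirmed as fraud."""
    return confirmed / investigated

# Hypothetical investigation records for two regions.
city = {"investigated": 400, "confirmed": 40}    # almost every report is checked
remote = {"investigated": 20, "confirmed": 18}   # only near-certain cases get a visit

city_rate = recorded_fraud_rate(city["confirmed"], city["investigated"])
remote_rate = recorded_fraud_rate(remote["confirmed"], remote["investigated"])
print(f"city: {city_rate:.0%}, remote: {remote_rate:.0%}")
```

A model trained on these records would see fraud as nine times more frequent in the remote region, even though the difference was created by the reporting process.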

Selection Bias:

Selection bias can occur when the algorithm is not trained on properly random data. As we said at the beginning of this article, this bias appears when part of the population is missing or under-represented in the training data.

A real AI bias example is the research conducted by Joy Buolamwini, Timnit Gebru, and Deborah Raji, in which 1,270 images of parliament members from African and European countries were used to test facial analysis systems. The systems worked well only for men and lighter-skinned women, a gap commonly attributed to training data dominated by male and lighter-skinned faces.

Group Attribution Bias:

Group attribution bias occurs when the algorithm generalizes what is true of some individuals to the entire group they belong to. It happens because the algorithm classifies the data and extrapolates from one subset to the rest of the data set. An AI bias example here is a tool used for admissions or recruiting that gives more weight to candidates who graduated from certain universities over others.

Implicit Bias:

Implicit bias arises when the system is influenced by the data scientist's own hand-picked personal experiences instead of general experience. Childhood experiences are a typical source: if the person grew up with cultural cues that women are housekeepers, this stereotype can seep into the system, which then fails to recognize women in more influential roles.

How to Reduce AI Bias in Machine Learning Algorithms

Digging Deeper

One thing we need to understand is that some industries are more prone to AI bias and have a record of relying on biased systems. Knowing where AI has gone wrong in your industry before helps companies improve fairness and build on that industry experience.

Designing AI Models With an Impartial Mind

Before actually designing AI algorithms, it is a good idea to consult humanists and social scientists to check whether the system could be affected by human judgment. The model should also be evaluated against a defined set of metrics to check that it performs equally well across different age, gender, and racial groups.

The Data for Training Should Be Accurate

There should be strict, well-researched guidelines on how to collect, sample, and preprocess training data. Along with establishing transparent data processes, involve internal or external teams to spot discriminatory behavior and potential sources of AI bias.

Perform Testing With a Target in Mind

While testing your models, measure the AI's performance across different subgroups to uncover problems that are hidden in the aggregate numbers of a large dataset. Also, put the model through stress tests to check how it performs on very large data sets or complex calculations. In addition, continuously retest your models as you gain more real-life data and get feedback from users.
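Subgroup testing can be as simple as splitting one accuracy number into one number per group. The following is a minimal sketch with hypothetical group names and made-up test results. Real evaluations usually track more metrics than accuracy.

```python
from collections import defaultdict

def accuracy_by_group(examples):
    """examples: iterable of (group, true_label, predicted_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, prediction in examples:
        total[group] += 1
        correct[group] += int(truth == prediction)
    return {group: correct[group] / total[group] for group in total}

# Hypothetical test results: 90 examples from group_a, 10 from group_b.
results = (
    [("group_a", 1, 1)] * 88 + [("group_a", 1, 0)] * 2
    + [("group_b", 1, 1)] * 6 + [("group_b", 1, 0)] * 4
)

overall = sum(truth == pred for _, truth, pred in results) / len(results)
per_group = accuracy_by_group(results)
print(overall, per_group)
```

Here the overall accuracy of 94% looks fine, while the smaller group is classified correctly only 60% of the time. That gap is invisible until the results are broken down by group.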

A More Human-Like Approach

AI replicates human thinking, so it will obviously repeat some of the mistakes humans make in decision-making. If we take a more holistic approach and try to improve human decision-making, AI biases will become fewer and fewer.

Trying to Understand the System Better

Why exactly is the system creating biases? How does it generate predictions? Which data points are responsible for certain decisions? Answering these questions helps you understand the origin of the biases and, in turn, eliminate them once and for all.
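One simple way to probe which inputs drive a decision is to change a single attribute and see whether the prediction changes. The sketch below is only an illustration: `toy_model` is a deliberately biased stand-in for a real model, and the applicant records are invented.

```python
def flip_rate(model, rows, attribute, swap):
    """Fraction of rows whose prediction changes when only `attribute` is swapped."""
    changed = 0
    for row in rows:
        flipped = dict(row)
        flipped[attribute] = swap[row[attribute]]
        if model(row) != model(flipped):
            changed += 1
    return changed / len(rows)

def toy_model(row):
    """Hypothetical hiring rule that quietly uses a lower bar for men."""
    return row["score"] >= 70 or (row["gender"] == "m" and row["score"] >= 60)

# Hypothetical applicants.
applicants = [
    {"gender": "m", "score": 65},
    {"gender": "f", "score": 65},
    {"gender": "m", "score": 80},
    {"gender": "f", "score": 50},
]

rate = flip_rate(toy_model, applicants, "gender", {"m": "f", "f": "m"})
print(rate)
```

A non-zero flip rate for a protected attribute means the attribute itself is changing outcomes. A zero rate is not proof of fairness, because other features can act as proxies for the same attribute.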

Conclusion

Though AI has more benefits than limitations, AI bias remains its Achilles' heel. You have now gone through the main types of bias, and we hope you understand that AI bias means a number of related problems working together as one. Eliminating AI bias is easier said than done, but we hope the whole community will learn from these mistakes and come together to find solutions. If AI has grabbed your interest and you want a system of your own, don't forget to check our services page for a free consultation.

For now, we hope you had a good time learning what AI bias means and seeing some AI bias examples. If so, please don't forget to share. Thank you for reading, and have a great day.