Object Counting Using YOLO: Best Practices

Object detection and identification are important in the automation field. Interest in image processing and automation keeps growing as more industries adopt automation, and mechanisms for detecting, identifying, and counting items are being used by more and more people. Driven by shifting consumer demand and by recent machine learning and deep learning approaches, object recognition has become considerably more accurate. This article puts into practice a method for object recognition, categorization, and counting using YOLO.

What is Object Detection

Object detection is a computer vision technique for identifying and localizing objects within an image or a video.

Localization uses bounding boxes, which are rectangles drawn around the objects, to mark the exact position of one or more objects in the image.

Object detection is sometimes confused with “image classification” or “image recognition,” which seeks to determine which category or class an image, or an object contained in an image, belongs to.

The following image illustrates the explanation above. The object detected in the photograph is a “Person.”

What is YOLO

YOLO stands for “You Only Look Once.” It was created by Joseph Redmon, Santosh Divvala, Ross Girshick, and Ali Farhadi. The YOLO algorithm finds and identifies a variety of objects in an image. It treats object detection as a regression problem and returns the class probabilities of the detected objects. A single forward pass of the network produces the predictions for the complete image. There are several variants of the algorithm, including Tiny YOLO, YOLOv1, v2, v3, v4, v5, and v7. It is popular because of its speed and accuracy.

How Does YOLO Object Detection Work?

Now that you know what YOLO is, let’s take a high-level look at how the algorithm handles object detection in the context of a straightforward use case.

Assume you created a YOLO application that can identify soccer balls and players in a given image.

But how can you describe this procedure to someone, particularly someone who is not familiar with it?

That is the main purpose of this section. You will see each step of YOLO’s object detection process, including how to get from image A to image B.

The algorithm works based on the following four approaches:

- Residual blocks

- Bounding box regression

- Intersection over Union, or IOU for short

- Non-Maximum Suppression.

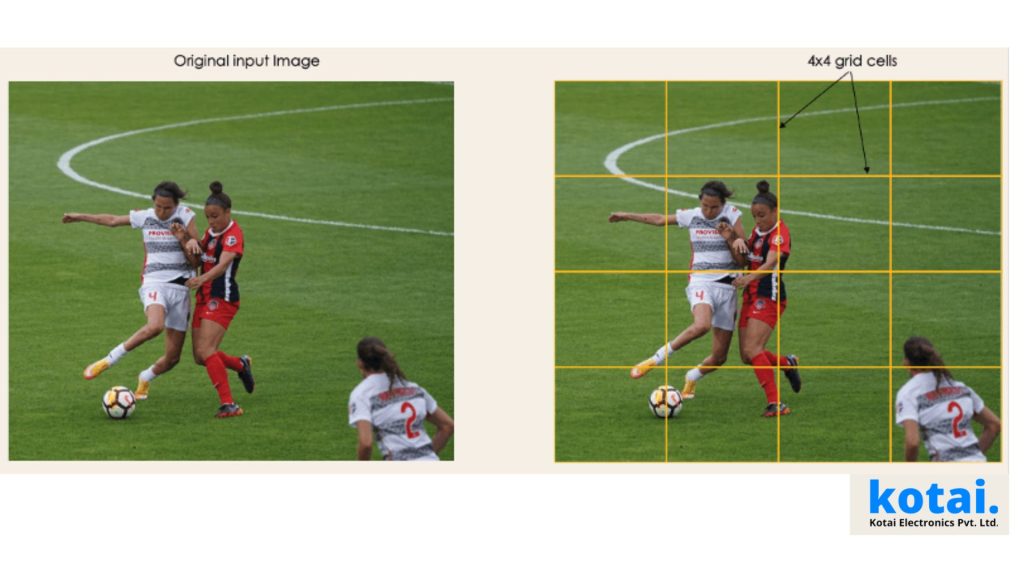

1- Residual blocks

Starting with the original image A, we first divide it into NxN grid cells of equal size, where N in this case is 4, as seen in the image to the right. Each grid cell is responsible for localizing the object it covers and predicting its class, together with the probability/confidence value.
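As a rough illustration of the grid step, the sketch below finds which cell of an NxN grid contains a given point, such as the center of an object. The function name and the 400x400 image size are assumptions made for this example and are not part of YOLO itself.

```python
def grid_cell_for_center(cx, cy, img_w, img_h, n=4):
    """Return (row, col) of the grid cell that contains the point (cx, cy).

    Hypothetical helper for illustration; pixel coordinates start at the
    top-left corner of the image.
    """
    cell_w = img_w / n
    cell_h = img_h / n
    # Clamp so that a point on the right or bottom edge stays inside the grid.
    col = min(int(cx // cell_w), n - 1)
    row = min(int(cy // cell_h), n - 1)
    return row, col


# A 400x400 image split into 4x4 cells: each cell is 100x100 pixels.
print(grid_cell_for_center(250, 120, 400, 400))  # (1, 2)
```

The cell found this way is the one that would be responsible for predicting the object whose center falls inside it.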

2- Bounding box regression

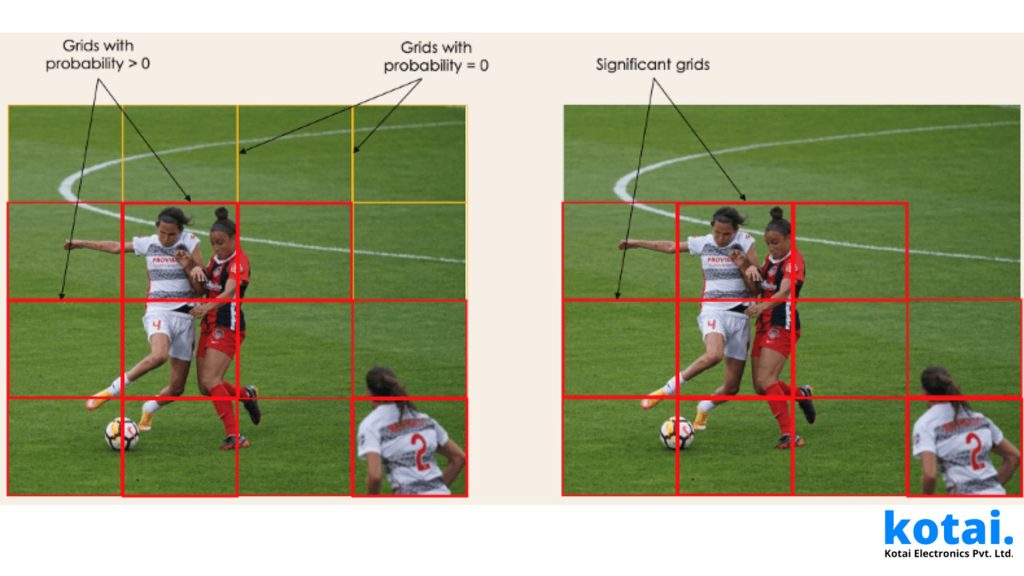

The next step is to determine the bounding boxes, which are rectangles that highlight all of the objects in the image. There can be as many bounding boxes as there are objects in a given image. YOLO determines the properties of these bounding boxes using a single regression module, where Y is the final vector representation of each bounding box.

Y = [pc, bx, by, bh, bw, c1, c2]

This is especially important during the training phase of the model.

The probability score of the grid cell containing an object is represented by pc. For example, every cell highlighted in red has a probability greater than zero. The image on the right is the simplified version, since the probability of each yellow cell is zero.

bx and by are the x and y coordinates of the bounding box’s center relative to the enclosing grid cell. bh and bw are the bounding box’s height and width relative to the enclosing grid cell. c1 and c2 correspond to the two classes, Player and Ball. If your use case calls for more classes, you can add as many as you need.

To understand this, let’s pay closer attention to the player at the bottom right.
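To make the vector Y concrete, here is a minimal sketch that builds it for a single object, following the description above (center, height, and width expressed relative to the enclosing grid cell). The function name, the pixel coordinates, and the 400x400 image size are made-up values for illustration and do not come from a real image.

```python
def encode_box(box, img_w, img_h, class_index, n=4, num_classes=2):
    """Build Y = [pc, bx, by, bh, bw, c1, ..., cK] for one object.

    box is (x_min, y_min, x_max, y_max) in pixels. Hypothetical helper:
    it follows the convention described in the text, not a specific
    YOLO implementation.
    """
    x_min, y_min, x_max, y_max = box
    cell_w, cell_h = img_w / n, img_h / n

    # Center of the box and the grid cell that contains it.
    cx, cy = (x_min + x_max) / 2, (y_min + y_max) / 2
    col = min(int(cx // cell_w), n - 1)
    row = min(int(cy // cell_h), n - 1)

    bx = cx / cell_w - col          # center x, relative to the cell
    by = cy / cell_h - row          # center y, relative to the cell
    bh = (y_max - y_min) / cell_h   # height in units of cell height
    bw = (x_max - x_min) / cell_w   # width in units of cell width

    # One-hot class scores, e.g. index 0 = Player, index 1 = Ball.
    classes = [1.0 if i == class_index else 0.0 for i in range(num_classes)]
    return (row, col), [1.0, bx, by, bh, bw] + classes


# A "Player" box near the bottom right of a 400x400 image.
cell, y = encode_box((300, 250, 380, 390), 400, 400, class_index=0)
print(cell, [round(v, 2) for v in y])
```

Note that bh can be larger than 1 here, because a box may span more than one grid cell.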

3- Intersection over Union or IOU

A single object in an image often has several candidate grid boxes for prediction, even though not all of them are relevant. The purpose of the IOU (a value between 0 and 1) is to discard the irrelevant boxes and keep only the relevant ones. Here is the reasoning behind it:

The user sets the IOU selection threshold, for example 0.5. YOLO then computes the IOU of each grid cell, which is the intersection area divided by the union area. Finally, it ignores the predictions of grid cells with an IOU at or below the threshold and keeps those with an IOU above the threshold. An example of applying this selection to the object at the bottom left is shown below.
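The intersection-over-union ratio itself is simple to compute. The following sketch assumes boxes given as (x_min, y_min, x_max, y_max) corner coordinates; the function name and the sample boxes are illustrative choices.

```python
def iou(box_a, box_b):
    """Intersection area divided by union area for two axis-aligned boxes.

    Each box is (x_min, y_min, x_max, y_max).
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap; zero when the boxes do not intersect.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


threshold = 0.5
score = iou((0, 0, 10, 10), (5, 0, 15, 10))  # overlap 50, union 150
print(round(score, 2), score > threshold)    # about 0.33, below the threshold
```

With a threshold of 0.5, this pair of boxes would not pass the selection, since only a third of their combined area overlaps.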

4- Non-Max Suppression or NMS

Setting an IOU threshold is not always sufficient, because an object may have several boxes with an IOU above the threshold, and keeping all of those boxes could introduce noise. Here, NMS can be used to keep only the boxes with the highest detection probability.
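One common way to do this is greedy NMS: repeatedly keep the highest-scoring box and drop the remaining boxes that overlap it too much. The sketch below is a plain-Python illustration under that assumption, with made-up boxes and scores; it is not the exact routine used inside any particular YOLO release.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: return the indices of the boxes to keep."""
    # Candidate indices, highest score first.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        # Drop every remaining box that overlaps the kept box too much.
        order = [i for i in order
                 if iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (50, 50, 60, 60)]
scores = [0.9, 0.8, 0.7]
print(non_max_suppression(boxes, scores))  # [0, 2]
```

The second box overlaps the first with an IOU of about 0.68, so it is suppressed, while the third box belongs to a different object and is kept.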

Applications of YOLO

The YOLO algorithm can be applied in the following fields:

Autonomous driving: The algorithm can be used in autonomous vehicles to identify nearby objects such as other automobiles, pedestrians, and parking signals. Since no human driver is operating the vehicle, object detection is used to prevent collisions.

Wildlife: The algorithm is used to detect different kinds of animals in forests. Journalists and wildlife rangers both use this form of detection to locate animals in still photos and in videos, both recorded and live. Giraffes, elephants, and bears are a few of the animals that can be detected.

Security: YOLO can also be employed in security systems to enforce security in a location. Assume that security restrictions prohibit people from entering a particular area. The YOLO algorithm will detect anyone who enters the restricted area, prompting the security staff to take further action.


YOLO Object Counting

YOLO’s inference appears to be accurate, so I believe now is the time to start building our own object-counting function. To do that, we first need to understand how the detect.py file works.

A section of detect.py provides information about the discovered objects.

If we print “n” and “s”, we get:

n is the number of detections for each class.

(det[:,-1]==c).sum()

Here, “c” is the class index of a detection. I’m using the COCO dataset in this case, which has 80 classes. Since “c” gives us the class index, we can obtain the class name by index with:

names[int(c)]

My function will store each class name together with its count. A dictionary lets us keep both together. So, let’s make a dictionary:

I can now add class names and their detection counts to my dictionary.
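A minimal sketch of this dictionary step could look like the following. It uses plain Python lists in place of the detection tensor from detect.py, with rows laid out as [x1, y1, x2, y2, confidence, class index]; the function name, the sample rows, and the shortened names list are assumptions for illustration.

```python
def count_by_class(detections, names):
    """Map each detected class name to its number of detections.

    detections: rows of [x1, y1, x2, y2, confidence, class_index],
    so the class index is the last value of each row (like det[:, -1]).
    """
    found_classes = {}
    for row in detections:
        name = names[int(row[-1])]  # same lookup as names[int(c)]
        found_classes[name] = found_classes.get(name, 0) + 1
    return found_classes


# Shortened stand-in for the 80 COCO class names.
names = ["person", "bicycle", "car"]
detections = [
    [10, 20, 50, 120, 0.91, 0],
    [60, 25, 95, 130, 0.88, 0],
    [200, 80, 320, 160, 0.76, 2],
]
print(count_by_class(detections, names))  # {'person': 2, 'car': 1}
```

Each value in the dictionary plays the same role as “n” in detect.py, the number of detections for one class.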

I believe we have everything we need. The time has finally come to write the function. To start the code, I scroll up to the top of the file. We will define our function before the def detect.

I now draw our detection counts on the screen using im0 and my dictionary of found classes. I position the text using “align=im0.shape,” and I’ve decided to place it at the bottom right. It will look beautiful.

In the for-loop, I take each entry from my dictionary one at a time and assign the class and its count to “a.” The final step is to draw the text containing each class and its count on the screen.

In the cv2.putText method, the text is placed at the position (align right, int(align bottom)). To keep the screen readable, we also need to shift the bottom alignment for every line, because if we don’t, all the lines will be drawn on top of one another.
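The positioning logic can be sketched separately from the drawing. The helper below only computes the label text and its position for each class; the function name, the 35-pixel line height, and the horizontal offset are assumptions chosen for this example, not values taken from detect.py.

```python
def layout_count_labels(found_classes, frame_shape, line_height=35):
    """Return (text, (x, y)) pairs stacked upward from the bottom right.

    frame_shape is (height, width, channels), like im0.shape.
    Hypothetical helper: names and spacing are illustrative.
    """
    height, width = frame_shape[:2]
    align_right = width * 3 // 5   # start the text at 60% of the frame width
    align_bottom = height
    labels = []
    for name, count in found_classes.items():
        # Move up one line per entry so the labels do not overlap.
        align_bottom -= line_height
        labels.append((f"{name} = {count}", (align_right, align_bottom)))
    return labels


labels = layout_count_labels({"person": 2, "car": 1}, (480, 640, 3))
print(labels)  # [('person = 2', (384, 445)), ('car = 1', (384, 410))]
```

Each pair can then be drawn on the frame with OpenCV, for example `cv2.putText(im0, text, org, cv2.FONT_HERSHEY_SIMPLEX, 1, (45, 255, 255), 1, cv2.LINE_AA)`, where the font, color, and thickness are again free choices.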

Beautiful :D We are now ready to go. The last step is to call the count function inside the def detect function.

Let’s see how well it works.

It works nicely. Done! We now have an object counter for YOLOv7. This counter can be used with any image or video.

Conclusion

YOLO is one of the leading real-time object detection methods because it is faster than earlier algorithms while maintaining a high level of accuracy. Although the YOLO network learns generalizable representations of objects, its accuracy is limited for small objects that appear close together, because of the spatial constraints of the grid.

We now have a general understanding of object detection and the YOLO algorithm. We have discussed the main techniques on which the YOLO algorithm is based, and the way the algorithm operates should now be clear.